At Google, we actively look for ways to ensure a safe user experience when making decisions about the ads people see and the content that can be monetized on our platforms. Developing policies in these areas and consistently enforcing them is one of the primary ways we keep people safe and preserve trust in the ads ecosystem.

2021 marks one decade of releasing our annual Ads Safety Report, which highlights the work we do to prevent malicious use of our ads platforms. Providing visibility into the ways we’re preventing policy violations in the ads ecosystem has long been a priority, and this year we’re sharing more data than ever before.

Our Ads Safety Report is just one way we provide transparency to people about how advertising works on our platforms. Last spring, we also introduced our advertiser identity verification program. We are currently verifying advertisers in more than 20 countries and have started to share the advertiser name and location in our About this ad feature, so that people know who is behind a specific ad and can make more informed decisions.

Enforcement at scale

In 2020, our policies and enforcement were put to the test as we collectively navigated a global pandemic, multiple elections around the world and the continued fight against bad actors looking for new ways to take advantage of people online. Thousands of Googlers worked around the clock to deliver a safe experience for users, creators, publishers and advertisers. We added or updated more than 40 policies for advertisers and publishers. We also blocked or removed approximately 3.1 billion ads for violating our policies and restricted an additional 6.4 billion ads.

Our enforcement is not one-size-fits-all, and this is the first year we’re sharing information on ad restrictions, a core part of our overall strategy. Restricting ads allows us to tailor our approach based on geography, local laws and our certification programs, so that approved ads only show where appropriate, regulated and legal. For example, we require online pharmacies to complete a certification program, and once certified, we only show their ads in specific countries where the online sale of prescription drugs is allowed. Over the past several years, we’ve seen an increase in country-specific ad regulations, and restricting ads allows us to help advertisers follow these requirements regionally with minimal impact on their broader campaigns.
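To make the idea of a restricted ad concrete, here is a minimal sketch of the online pharmacy example, not a description of any production system. The `Ad` class, the `ALLOWED_COUNTRIES` table, the `can_serve` function and the placeholder country codes `XA` and `XB` are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ad:
    """Hypothetical ad record used only for this illustration."""
    advertiser: str
    category: str
    certified: bool


# Hypothetical rule table: restricted category -> countries where a certified
# advertiser may serve. "XA" and "XB" are placeholder codes, not real countries.
ALLOWED_COUNTRIES = {
    "online_pharmacy": {"XA", "XB"},
}


def can_serve(ad: Ad, country: str) -> bool:
    """Return True if the ad may be shown in the given country."""
    allowed = ALLOWED_COUNTRIES.get(ad.category)
    if allowed is None:
        # Category is not restricted in this toy model.
        return True
    # Restricted category: the advertiser must be certified AND the country allowed.
    return ad.certified and country in allowed


pharmacy_ad = Ad(advertiser="example-pharmacy", category="online_pharmacy", certified=True)
print(can_serve(pharmacy_ad, "XA"))  # country is in the allowed set
print(can_serve(pharmacy_ad, "XC"))  # country is not in the allowed set
```

The point of the sketch is that a restricted ad is neither approved nor blocked outright: the same ad can be eligible in one country and ineligible in another, and the rest of the advertiser's campaign is unaffected.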

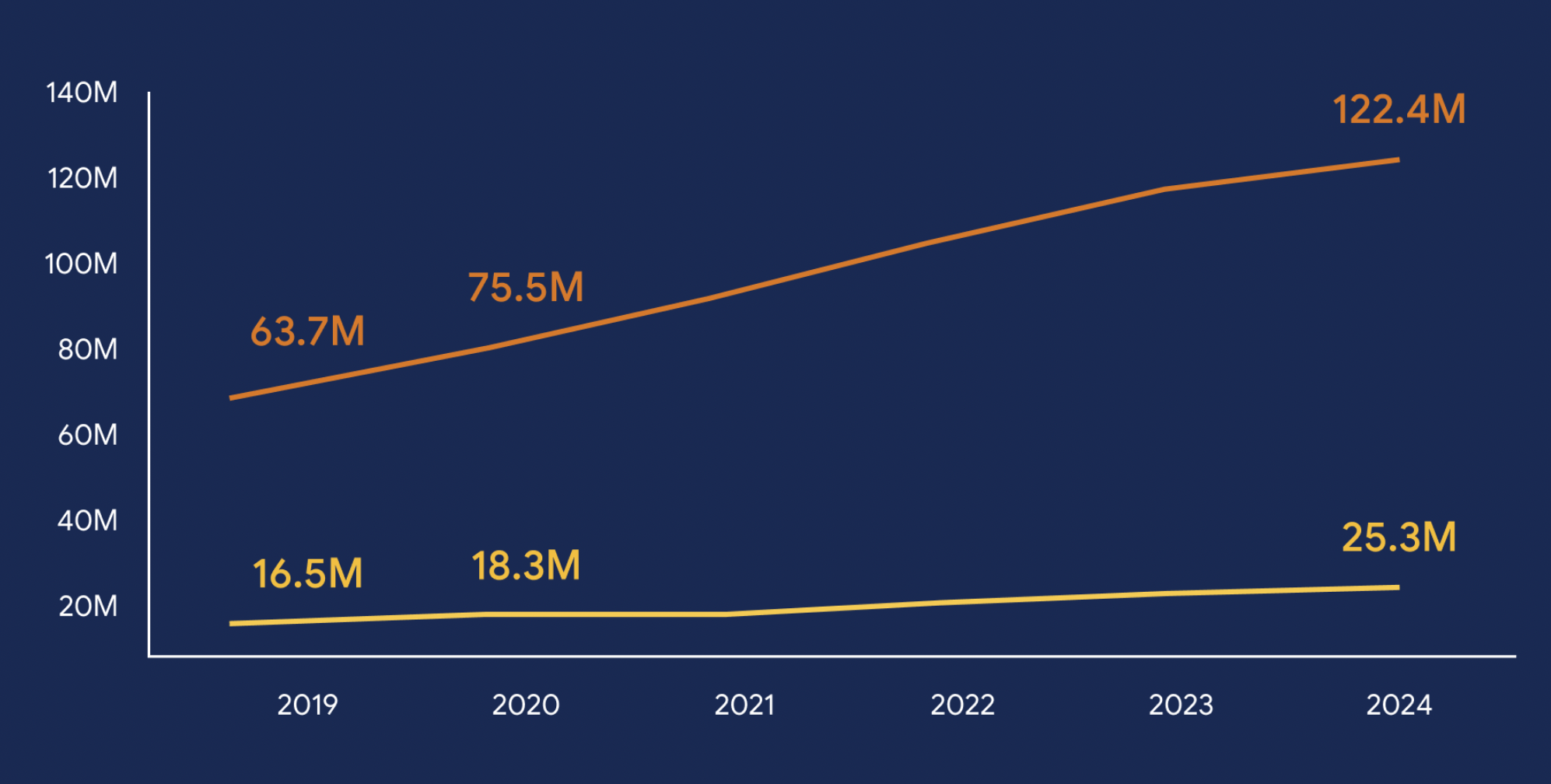

We also continued to invest in our automated detection technology to effectively scan the web for publisher policy compliance at scale. Due to this investment, along with several new policies, we vastly increased our enforcement and removed ads from 1.3 billion publisher pages in 2020, up from 21 million in 2019. We also stopped ads from serving on over 1.6 million publisher sites with pervasive or egregious violations.

Remaining nimble when faced with new threats

As the number of COVID-19 cases rose around the world last January, we enforced our sensitive events policy to prevent behavior such as price-gouging on in-demand products like hand sanitizer, masks and paper goods, and to block ads promoting false cures. As we learned more about the virus and health organizations issued new guidance, we evolved our enforcement strategy to start allowing medical providers, health organizations, local governments and trusted businesses to surface critical updates and authoritative content, while still preventing opportunistic abuse. Additionally, as claims and conspiracies about the coronavirus’s origin and spread circulated online, we launched a new policy to prohibit both ads and monetized content about COVID-19 or other global health emergencies that contradict scientific consensus.

In total, we blocked over 99 million COVID-19-related ads from serving throughout the year, including those for miracle cures, N95 masks (due to supply shortages) and, most recently, fake vaccine doses. We continue to be nimble, tracking bad actors’ behavior and learning from it. In doing so, we’re able to better prepare for future scams and claims that may arise.

Fighting the newest forms of fraud and scams

Often when we experience a major event like the pandemic, bad actors look for ways to take advantage of people online. Last year, we saw an uptick in opportunistic advertising and fraudulent behavior from actors looking to mislead users. Increasingly, we’ve seen them use cloaking to hide from our detection, promote non-existent virtual businesses, or run ads for phone-based scams that lure unsuspecting consumers off our platforms with the aim of defrauding them.

In 2020 we tackled this adversarial behavior in a few key ways:

- Introduced multiple new policies and programs including our advertiser identity verification program and business operations verification program.
- Invested in technology to better detect coordinated adversarial behavior, allowing us to connect the dots across accounts and suspend multiple bad actors at once.
- Improved our automated detection technology and human review processes based on network signals, previous account activity, behavior patterns and user feedback.

The number of ad accounts we disabled for policy violations increased by 70% from 1 million to over 1.7 million. We also blocked or removed over 867 million ads for attempting to evade our detection systems, including cloaking, and an additional 101 million ads for violating our misrepresentation policies. That’s a total of over 968 million ads.
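For readers who want to check the arithmetic behind these figures, the short sketch below recomputes the percentage increase and the combined total. The variable and function names are illustrative, and because the report gives several figures as "over" a value, the inputs are lower bounds.

```python
# Figures as stated in the report, in millions.
accounts_disabled_before = 1.0
accounts_disabled_2020 = 1.7   # "over 1.7 million", treated as a lower bound
ads_evading_detection = 867    # "over 867 million", treated as a lower bound
ads_misrepresentation = 101


def percent_increase(before: float, after: float) -> float:
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


account_growth = percent_increase(accounts_disabled_before, accounts_disabled_2020)
total_ads = ads_evading_detection + ads_misrepresentation

print(f"Disabled accounts grew by about {account_growth:.0f}%")
print(f"Ads blocked or removed across both categories: over {total_ads} million")
```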

Protecting elections around the world

When it comes to elections around the world, ads help voters access authoritative information about the candidates and voting processes. Over the past few years, we introduced strict policies and restrictions around who can run election-related advertising on our platforms and the ways they can target ads; we launched comprehensive political ad libraries in the U.S., the U.K., the European Union, India, Israel, Taiwan, Australia and New Zealand; and we worked diligently with our enforcement teams around the world to protect our platforms from abuse. Globally, we continue to expand our verification program and verified more than 5,400 additional election advertisers in 2020. In the U.S., as it became clear that the outcome of the presidential election would not be determined immediately, we concluded that the U.S. election fell under our sensitive events policy and enforced a pause on U.S. political ads, starting after the polls closed and continuing through early December. During that time, we temporarily paused more than five million ads and blocked ads on over three billion Search queries referencing the election, the candidates or its outcome. We made this decision to limit the potential for ads to amplify confusion in the post-election period.

Demonetizing hate and violence

Last year, news publishers played a critical role in keeping people informed, prepared and safe. We’re proud that digital advertising, including the tools we offer to connect advertisers and publishers, supports this content. We have policies in place to protect both brands and users.

In 2017, we developed more granular means of reviewing sites at the page level, including user-generated comments, to allow publishers to continue to operate their broader sites while protecting advertisers from negative placements by stopping persistent violations. In the years since introducing page-level action, we’ve continued to invest in our automated technology, and it was crucial in a year in which we saw an increase in hate speech and calls to violence online. This investment helped us to prevent harmful web content from monetizing. We took action on nearly 168 million pages under our dangerous and derogatory policy.

Continuing this work in 2021

We know that when we make decisions through the lens of user safety, it will benefit the broader ecosystem. Preserving trust for advertisers and publishers helps their businesses succeed in the long term. In the upcoming year, we will continue to invest in policies, our team of experts and enforcement technology to stay ahead of potential threats. We also remain steadfast on our path to scale our verification programs around the world in order to increase transparency and make more information about the ad experience universally available.